How to get started with AI in HR

You've probably read the headlines. Your CEO has asked about your AI strategy. A few vendors have pitched you platforms built on "AI-native architecture." Your team has tried ChatGPT for a handful of tasks. And somewhere in all of that, you're still not sure where to actually begin.

Getting started with AI in HR means choosing one high-impact use case, assessing whether your data and team can support it, running a measurable pilot, and building internal alignment so the pilot survives long enough to produce results. It does not mean launching a transformation, replacing your HRIS, or committing to an AI-first operating model.

The gap between AI's promise and the average HR team's ability to capture value is wide, and the path through it isn't obvious. But you don't need to close that gap in one move. A good first step is narrower: choose one problem, pair it with the right tool, and learn what the technology can and can't do inside your own workflows.

This guide walks through how to make that first move without overcommitting, overpaying, or overpromising.

Why getting started with AI in HR feels difficult

Most HR leaders are caught between three kinds of pressure. From above, there's an expectation to have an AI plan. From the market, there's a steady stream of vendors claiming their platform is the answer. From inside the team, there's skepticism: people have been burned by tools that promised more than they delivered, and they've seen enough hype cycles to know that "AI-powered" doesn't always mean "useful."

Eighty-eight percent of HR leaders say their organizations haven't realized significant business value from AI tools, according to a Gartner survey. A broader BCG study found that 74% of companies across industries have yet to show tangible value from their AI investments, with only 26% developing the capabilities to move beyond proofs of concept. And among HR teams that aren't using AI at all, 67% cite lack of awareness of AI's capabilities as their top reason — they don't know where to start or how it could help.

The problem isn't that AI doesn't work. It's that starting well matters more than starting broadly. You don't need a full transformation to capture value. You need a focused entry point: one use case, one pilot, one early win that proves the model before you scale.

What "getting started" actually means — and what it doesn't

What it means

It means identifying one or two use cases where AI can realistically make work faster or better. It means honestly assessing whether your data, tools, and team are ready. It means running a small pilot with measurable outcomes. And it means building enough internal alignment that the pilot doesn't get shut down by politics before it has a chance to produce results.

That's it. The goal at this stage isn't to overhaul anything. It's to build a business case for doing more.

The good news is that you're not starting alone. SHRM's 2025 Talent Trends research found that 43% of organizations worldwide used AI for HR and recruiting tasks in 2025, up from 26% the year before. Recruiting is the leading HR practice area for AI, ahead of HR technology, learning and development, and employee experience.

What it doesn't mean

Getting started doesn't mean launching a company-wide AI transformation. It doesn't mean replacing your ATS, HRIS, or performance platform with something newer. It doesn't mean training a proprietary language model on your employee data. And it doesn't mean committing to an AI-first operating model before you've shipped a single pilot.

Each of those might be on the horizon at some point. None of them belong in a first move.

For full implementation steps, see How to Implement AI in HR: A Practical Guide.

Step 1: Identify high-impact, low-risk use cases

The first decision is the most important one. If you pick the wrong use case, everything downstream gets harder: ROI is unclear, adoption slows, and skeptics gain ammunition. If you pick the right one, momentum builds on its own.

Where AI delivers fast value in HR

The highest-leverage early use cases tend to cluster in recruiting and talent acquisition. That's not a coincidence. TA is high-volume, metric-rich, and full of repetitive work, which are exactly the conditions under which AI can measurably reduce effort without introducing new risk.

McKinsey estimates that 20% of generative AI's total value potential in HR sits in talent acquisition, recruiting, and onboarding — the single largest HR sub-function for AI impact. Their broader analysis suggests AI could reduce time spent on administrative HR work by 60 to 70%, freeing teams for work that requires judgment.

In practical terms, early use cases tend to look like:

- Candidate sourcing and talent discovery: Using AI to find strong candidates beyond the limits of keyword search

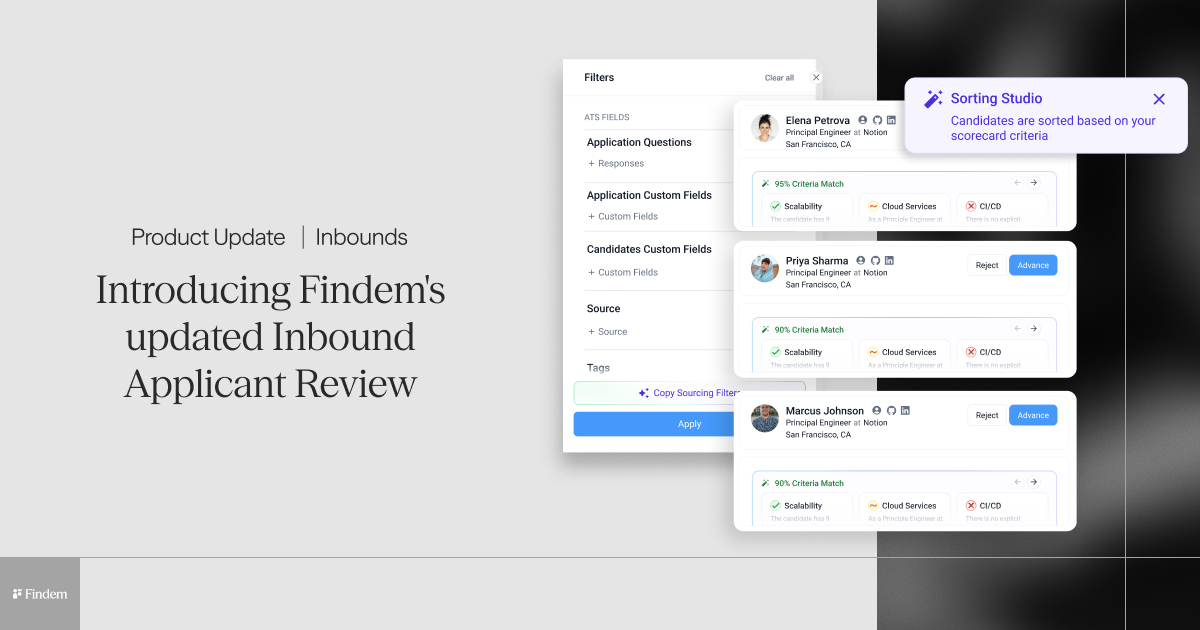

- Resume screening augmentation: Using AI to triage inbound applications without making final decisions

- Talent rediscovery: Surfacing candidates already in the ATS or CRM who fit current roles

- Skills-based matching: Evaluating candidates on attributes and experience rather than title overlap

- Pipeline insights: Identifying gaps, patterns, or signal problems in existing pipelines

Characteristics of a good first use case

Whatever use case you pick, it should share these traits. The work is high volume and repetitive, so time savings compound. Success is measurable, so you can define a baseline and compare against it. The regulatory surface area is manageable. The underlying data already exists in some usable form. And recruiters, hiring managers, or HR partners are already frustrated with how the work gets done today.

That last one matters more than people think. A use case tied to an actual operational pain point will get adopted. A use case built on a theoretical benefit rarely does.
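The five traits above can be turned into a rough scoring rubric for comparing candidate use cases. The sketch below is illustrative only: the trait names, the 1-to-5 scale, the equal weighting, and the example scores are all assumptions, not figures from any study or vendor.

```python
from dataclasses import dataclass

# The five traits described above. Names and the 1-5 scale are assumptions.
TRAITS = (
    "volume",               # high volume, repetitive work
    "measurability",        # a baseline can be defined and compared
    "low_regulatory_risk",  # manageable regulatory surface area
    "data_availability",    # data already exists in usable form
    "team_pain",            # people are already frustrated with the status quo
)


@dataclass
class UseCase:
    name: str
    scores: dict[str, int]  # trait -> 1 (weak) to 5 (strong)


def total_score(use_case: UseCase) -> int:
    """Sum the trait scores; a trait with no score counts as the lowest value, 1."""
    return sum(use_case.scores.get(trait, 1) for trait in TRAITS)


def rank_use_cases(candidates: list[UseCase]) -> list[UseCase]:
    """Return candidates ordered from strongest to weakest fit."""
    return sorted(candidates, key=total_score, reverse=True)


# Hypothetical scores a team might assign in a workshop.
sourcing = UseCase("Candidate sourcing", {
    "volume": 5, "measurability": 4, "low_regulatory_risk": 4,
    "data_availability": 3, "team_pain": 5,
})
screening = UseCase("Resume screening", {
    "volume": 5, "measurability": 4, "low_regulatory_risk": 2,
    "data_availability": 4, "team_pain": 4,
})

ranked = rank_use_cases([screening, sourcing])
for use_case in ranked:
    print(use_case.name, total_score(use_case))
```

Equal weights keep the rubric simple. A team that cares most about operational pain could weight that trait more heavily, but the point of the exercise is the conversation it forces, not the number.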

Step 2: Assess your AI readiness

Tools are the easy part. The harder part is whether your data, your organization, and your processes are ready to support an AI pilot in the first place. BCG's research is emphatic on this point: 70% of AI implementation challenges stem from people and process issues, not technology. Only 20% are technology problems, and 10% are about the algorithms themselves.

A quick readiness check across three dimensions will save you months later.

Data readiness

AI output is only as good as the data it works from. Before a pilot, look honestly at whether your candidate data is structured or scattered across fields, whether your ATS and CRM are actually connected, and whether the data inside them is reasonably clean. You don't need perfection. You do need enough structure that an AI tool can work from context rather than noise.

Organizational readiness

Does your leadership team actively support AI work, or tolerate it? Is your HR team open to changing workflows, or protective of them? Is there a baseline understanding of what AI can and can't do? And is IT willing to collaborate on integration and governance, or will every request turn into a six-week ticket?

These aren't yes-or-no questions. They're directional. A pilot can succeed with a lukewarm organization if expectations are calibrated accordingly.

Process readiness

AI doesn't fix broken processes. It accelerates them. If the workflow you're applying AI to is already unclear — ownership is fuzzy, KPIs are loose, handoffs are informal — AI will make those problems faster, not better. Before running a pilot, make sure the underlying process has defined steps, measurable outcomes, and a clear owner.

Step 3: Build internal alignment

Pilots usually fail because nobody agreed on what success would look like, or because someone important wasn't in the room when the decision got made. Spending a few weeks on alignment before a pilot starts is almost always worth it.

Key stakeholders

Depending on your use case, you'll likely need some combination of HR leadership, talent acquisition, IT, legal and compliance, and — in some regions — works councils or employee representatives. The goal is to make sure the right people can weigh in before the pilot launches, not after.

Securing buy-in

The fastest way to lose a skeptical stakeholder is to open with AI. The fastest way to earn their support is to open with a business problem they already care about. Lead with the operational pain — time-to-fill, candidate drop-off, recruiter overload — and position AI as one possible path to addressing it. Use concrete quick-win examples where possible. And be explicit about what AI will and won't do: it supports human judgment, it doesn't replace it.

That framing matters for employees, too. SHRM's 2026 research found that AI is 5.7 times more likely to shift job responsibilities than to displace jobs, and only 7% of HR professionals report job displacement from AI. That's a useful data point to have on hand when the conversation turns anxious.

Candidates watch this conversation closely, too. Seventy-nine percent of candidates want to know exactly how AI is used in the hiring process, but only 37% trust AI to select qualified applicants. Building transparency into your rollout isn't just an internal alignment question; it's a candidate experience question.

Many organizations find that sourcing workflows are a natural first place to build alignment, because the wins show up fast and the risks are well-understood.

Step 4: Choose the right tools to start

Tool selection is where a lot of first-time AI projects go sideways. The market is full of platforms that claim the same things, use the same language, and benchmark themselves against the same metrics. Separating signal from noise requires a clearer evaluation lens.

What to look for

For a first pilot, prioritize five things: integration with the systems you already use; usability for recruiters and HR teammates, not just admins; transparent AI outputs you can inspect and explain; a strong underlying data foundation; and real compliance readiness.

On that last point: 57% of HR professionals in US states with AI employment regulations aren't aware those laws exist — a gap that's only going to widen as regulation expands. Lawsuits alleging AI hiring discrimination are rising, and in the closely watched Mobley v. Workday case, a federal court granted preliminary class certification for applicants alleging AI screening tools produced disparate impact based on race, age, and disability.

Due diligence on bias auditing and transparency is no longer optional.
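One common starting point for a disparate impact check is to compare selection rates across groups. The sketch below uses the four-fifths heuristic from the US Uniform Guidelines on Employee Selection Procedures, which flags any group whose selection rate falls below 80% of the highest group's rate. It is a screening heuristic under stated assumptions, not a legal test or a substitute for a formal bias audit; the group labels and counts are hypothetical.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to advanced / applicants. Groups with no applicants are skipped."""
    return {
        group: advanced / applicants
        for group, (advanced, applicants) in outcomes.items()
        if applicants > 0
    }


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    if highest == 0:
        return {}  # nobody advanced, so no ratio can be computed
    return {group: rate / highest for group, rate in rates.items()}


def flagged_groups(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths)."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening outcomes: group -> (candidates advanced, total applicants).
screening_outcomes = {
    "group_a": (50, 100),
    "group_b": (30, 100),
    "group_c": (45, 100),
}

print(flagged_groups(screening_outcomes))
```

A flag here is a prompt to investigate with legal and compliance, not a finding. Small sample sizes in particular can produce ratios that look alarming or reassuring by chance.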

Why data-first platforms matter

Most AI tools in HR are only as good as the data they have access to. That sounds obvious, but it's the thing most teams overlook during tool selection.

Many tools work from thin inputs such as job titles, keywords, and parsed resume text. That's why the results so often feel underwhelming: the AI is doing its job, but it's working from a narrow view of each candidate. A platform built on structured, verified talent data can evaluate candidates on the attributes, experiences, and signals that actually predict fit, rather than surface-level title overlap.

Skills-based matching, attribute-based search, talent rediscovery across the ATS and CRM, and explainable outputs all depend on the same foundation: structured data with enough context to support real judgment. When you evaluate vendors, ask where their data comes from, how it's maintained, and what the system can tell you about why it surfaced any given candidate. The answer is often the clearest way to tell a serious platform from a thin one.

Step 5: Run a pilot project

Pilots are how you turn a hypothesis into evidence. Done well, a pilot produces data you can defend, a workflow your team can adopt, and a clearer view of what to build next. Done poorly, it produces anecdotes and disagreement.

How to structure a pilot

Pick one team with a real problem to solve — a recruiting pod working on a specific function, for example, or a talent ops lead trying to improve CRM hygiene. Define baseline metrics before anything else: time-to-fill, response rate, pipeline conversion, recruiter hours per req, whatever matters for the use case. Run the pilot in a controlled way, with clear start and end dates. And compare results against the baseline you captured, not against gut feel.
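Comparing against a baseline can be as simple as computing the percent change for each metric captured before and during the pilot. The sketch below assumes hypothetical metric names and made-up values; it shows the arithmetic only, not expected results.

```python
def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from baseline, in percent. Negative means the value fell."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a percent change")
    return (pilot - baseline) / baseline * 100


def compare_to_baseline(
    baseline: dict[str, float], pilot: dict[str, float]
) -> dict[str, float]:
    """Percent change, rounded to one decimal, for metrics present in both periods."""
    return {
        metric: round(percent_change(baseline[metric], pilot[metric]), 1)
        for metric in baseline
        if metric in pilot
    }


# Hypothetical numbers, captured before the pilot and at its end date.
baseline_metrics = {
    "time_to_fill_days": 40.0,
    "response_rate": 0.20,
    "recruiter_hours_per_req": 30.0,
}
pilot_metrics = {
    "time_to_fill_days": 34.0,
    "response_rate": 0.25,
    "recruiter_hours_per_req": 24.0,
}

changes = compare_to_baseline(baseline_metrics, pilot_metrics)
for metric, change in changes.items():
    print(f"{metric}: {change:+.1f}%")
```

Whether a negative change is good depends on the metric: a drop in time-to-fill is an improvement, while a drop in response rate is not. Writing down the desired direction for each metric before the pilot starts avoids arguments afterward.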

What success looks like

Good pilot outcomes tend to show up in a few places. Sourcing gets faster. Candidate quality — measured by interview rates, offer acceptance, or hiring manager feedback — improves. Recruiter efficiency goes up, with less time spent on manual triage and more on relationship-building.

The gains are real when the pilot is designed well. Companies using AI-powered recruitment tools have reported 33 to 50% reductions in time-to-hire, 30% reductions in cost-per-hire, and 31% increases in quality of hire, according to DemandSage's aggregated recruitment data. Results vary by context — yours may be more modest in a first pilot — but the direction is consistent.

One important caveat: 56% of organizations don't formally measure the success of their AI investments at all, according to SHRM. Don't be one of them. A pilot you can't measure isn't a pilot; it's a vibe.

Step 6: Learn, iterate, and expand

A successful pilot is the beginning of the work, not the end.

Evaluate results

Look honestly at three questions. Did the outcomes actually improve, measured against baseline? Was adoption strong — did the team use the tool the way you expected, or did they route around it? And did the pilot surface any risks, bias concerns, compliance gaps, or workflow issues that need to be addressed before scaling?

Decide next steps

If the pilot worked, there are three broad directions from here. You can deepen the use case by applying it to more roles, teams, or geographies. You can add adjacent workflows: if sourcing worked, try screening or nurture next. Or you can move toward a more structured implementation, with the governance and tooling needed to scale.

One thing going in your favor: employees generally want this work to succeed. Gartner's research found that 65% of employees say they are excited to use AI at work, and 77% take training when offered. The appetite is there. What usually limits progress is structure and guidance, not enthusiasm.

Scaling is covered in Enterprise Applications of AI in HR.

Common mistakes when getting started with AI in HR

- Trying to do too much too soon: Broad AI initiatives without a clear first use case tend to collapse under their own complexity.

- Choosing tools before defining use cases: Vendor demos are convincing. They're also a bad way to pick the problem you're trying to solve.

- Ignoring data quality: AI doesn't compensate for bad inputs. A readiness check on data is not optional.

- Skipping alignment: Pilots that surprise legal, IT, or leadership rarely survive.

- Expecting immediate transformation: The value of a first pilot is learning, not overhaul. Momentum builds; it doesn't just arrive.

Getting started checklist

Before launching your first AI pilot, make sure you can check off each of these:

- Identified one specific use case with a clear operational pain point

- Defined baseline metrics and success criteria

- Assessed data, organizational, and process readiness

- Aligned key stakeholders, including IT and compliance

- Chosen a tool evaluated on integration, usability, transparency, data foundation, and compliance

- Designed a pilot with clear scope, duration, and measurement plan

- Established how you'll evaluate results and decide next steps

If you can't check all seven, you're not ready to launch. Close the gaps first.
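The all-seven rule above can be expressed as a simple launch gate. This is a minimal sketch: the short item keys are illustrative labels for the checklist entries, not an established standard.

```python
# Short keys standing in for the seven checklist items above (labels are assumptions).
CHECKLIST = [
    "use_case_identified",
    "baseline_and_success_criteria_defined",
    "readiness_assessed",
    "stakeholders_aligned",
    "tool_chosen",
    "pilot_designed",
    "evaluation_plan_established",
]


def missing_items(completed: set[str]) -> list[str]:
    """Checklist items not yet completed, in checklist order."""
    return [item for item in CHECKLIST if item not in completed]


def ready_to_launch(completed: set[str]) -> bool:
    """True only when every checklist item is complete."""
    return not missing_items(completed)


# Hypothetical status for a team partway through preparation.
done = {"use_case_identified", "readiness_assessed", "tool_chosen"}

print("Ready:", ready_to_launch(done))
for gap in missing_items(done):
    print("Still open:", gap)
```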

Start small, think big with AI in HR

AI in HR doesn't reward perfection. It rewards early wins that build momentum. The teams that capture the most value aren't the ones with the biggest AI budgets or the most ambitious roadmaps. They're the ones that pick a focused problem, pair it with a tool that fits, and prove the model before they scale it.

BCG found that AI leaders follow a 10-20-70 rule — putting 10% of their resources into algorithms, 20% into technology and data, and 70% into people and processes. They also pursue half as many AI opportunities as their peers but scale twice as many. The lesson in both cases is the same: discipline beats ambition, especially at the start.

One factor that consistently accelerates early wins is the quality of the data the AI is working from. Platforms built on structured, verified talent data give you a stronger shot at meaningful pilot outcomes — and a cleaner path to scale when those pilots succeed. Findem is one of the ways teams make that first move, particularly in sourcing and talent discovery, where the return on a well-designed pilot tends to show up fastest.

Wherever you start, start focused. One use case, one pilot, one defensible result. That's what getting started actually looks like.
